In this article, we want to discuss the efficient way to Optimize SQL Queries in SQL Server. Before we start, if you want to know how to use a cursor in SQL, please go through the following article: Using Cursor in SQL.

Why optimize a query?

Many of us feel the query that we wrote is final and once it works and provides the result then that query is the best. Query optimization is not only the technique to make your query fetch details or to execute many CRUD operations but also they are the main scope to make the end-user continue with your application.

It’s the same situation when we access some websites, and fetching data pulls most of your time, then you may prefer to use other websites that get you all results instantly. Optimization is not only to create impact technically but also from a business point of view has a huge impact.

How to Optimize SQL Queries?

Hope now you feel that optimizing a query is better and also there are a few things that you have to consider before writing a query or before deploying the same.

Step 1: Avoid using “*” in select query

We have the habit of always writing a query using “*” in select operations

|

1 2 3 |

SELECT * FROM Students [X] |

Where the select will fetch all the columns of the table, whenever there is a need for the column then use it, don’t fetch all the columns of the table. Always use column-specific names.

|

1 2 3 |

SELECT ID, Name FROM Students [√] |

Step 2: Avoid using Copy Paste of Code randomly

We have the habit of copying the code from existing code or online forums whenever required while copying please use only the code that exactly applies to the logic, reusing similar code, or copying unwanted lines which may not create an impact on your query but may take time for execution, so during code reusability always use only code that exactly required to fetch results for you.

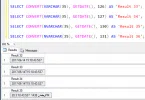

Step 3: Avoid using functions in Where Clause

When we have to execute any query there will be a conditional check using the where clause to filter data.

|

1 2 3 |

SELECT Name, DOB FROM Students WHERE DOB < getdate() [X] |

When we use any of the system-defined or user-defined functions in the where clause, then each time; i.e., each of the rows in the table will be checked against the function results which in turn execute the function each time. This will take more time and we all know the parameterless function will always return the same values. Better assign the function value to a variable and then use it in a where clause.

|

1 2 3 4 5 |

DECLARE @MyDate as date SET @MyDate = GetDate() SELECT Name, DOB FROM Students WHERE DOB < @MyDate [√] |

Step 4: Avoid using Joins between two types of columns

As all of you know Type Conversion during data manipulation is another place where a large amount of time will be consumed, when we are trying to join two types of columns then the other column has to be converted, and the values of the lower column have to be converted and this will take some time for converting for each of the row values.

|

1 2 3 4 5 6 |

SELECT Name FROM Student, Parents WHERE Student.SchoolFees = Parents.KidsFees [X] Student.SchoolFees - Int Value Parents.KidsFees - Float Value |

Step 5: Avoid using COUNT(*) from tables for getting row count

We usually use the COUNT(*) to get the number of rows available in a table.

Example:

|

1 2 3 |

SELECT COUNT(*) FROM Student [X]This count(*) makes the full scan of the table, whenever the code is executed. |

Example:

|

1 2 3 |

SELECT rows FROM Student WHERE id = OBJECT_ID('dbo.Orders') AND indid < 2 [√] |

Note: This query will not do a full scan of table rows, where if you need to check for any value for that condition available then this query may be useful, not always provide the exact count of rows.

Step 6: Avoid using DISTINCT when Join Tables

When we use the Distinct keyword it looks as if the query will be automatically optimized by the internal SQL engine, that is true when you use the Distinct for filtering operation when we use the distinct during the table join operations which has one too many relations then it’s not advisable.

Example:

|

1 2 3 |

SELECT DISTINCT s.Dept, d.Dept FROM Student s, Department d Where s.Dept = d.Dept [X] |

When we use the “Exists” query for fetching some operations during table join then it’s better to compare it to the Distinct compares.

Example:

|

1 2 3 |

SELECT d.Dept FROM Department d Where Exists ( SELECT ‘Y’ FROM Student s WHERE s.Dept = d.Dept ) [√] |

Step 7: Avoid Using Temp Tables

Using the temporary table is always an easy functionality for accessing the data values, but we should be careful about the right scenario to use the temp tables. When more than one table is joined and some conditional operations are compared and executed it’s not suggested to store the values in the temp table and access the large quantum of values.

It’s always suggested to use the “View”, where the View has more benefits in that the table operations are executed once the query can be fetched during execution and can be used optimized. Note: The temporary table may occupy the internal memory and that makes the process execution slow.

Step 8: Avoid Using Triggers

Trigger usage is an expensive process in SQL, so try to avoid firing and executing the triggers. Don’t use the triggers with any constraints and also avoid using the same triggers for multiple CRUD operations.

Step 9: Avoid Deadlocks during query executions

Deadlock handling is not an easy job for the larger transnational data volumes, when we handle quite large data processing with multiple processes then we should be clear on deadlock occurrence scenarios and try to avoid it. When you write any queries, views, functions, and store procedures always follow the same order of access to the tables.

Always try to break the operations in transactions into small blocks for easy understanding in troubleshooting. Larger transaction blocks may create deadlocks or dependencies and consume more operational and execution times.

Step 10: Avoid Using Locks during the reading process

The lock of the tables may affect the other process to get impact; there is an alternative query to use “WITH (NOLOCK)” which is equivalent to “READ UNCOMMITTED” at transaction isolation. This is also called a dirty read but this operation of using “With (NoLock)” can prevent the deadlock occurrences by multiple reads. Example:

|

1 2 3 |

SELECT Name, ID, Address FROM Student WITH(NOLOCK) [√] |

Hope this will help you to Optimize SQL Queries and help to reduce the execution time to some extent. Thank you.

Leave a Comment